The future of mass surveillance through the Internet of Things

Surveillance technology has long been part of law enforcement. However, its effectiveness and its unintended consequences have become hotly debated. The first video surveillance system was installed in 1942 in Nazi Germany to observe the launch of long-range guided ballistic missiles. It was created and deployed for a specific purpose and with a clear target. Surveillance technology, and the scale at which it is used, has developed rapidly and dramatically since then. As of 2019, an estimated 770 million surveillance cameras were installed around the world, according to a survey by IHS Markit.

The growth of mass surveillance prompts us to rethink our relationship with law enforcement agencies and the government, namely, the relationship between those being watched and those watching. Surveillance becomes even more problematic and intrusive when breakthroughs in other technologies, such as facial recognition, benefit those who are watching.

Surveillance technology inherently involves a certain degree of "exposure" of the person being watched to the person watching. It is generally considered acceptable for the police to ask for security footage to identify a suspect or narrow down a search after a crime has happened.

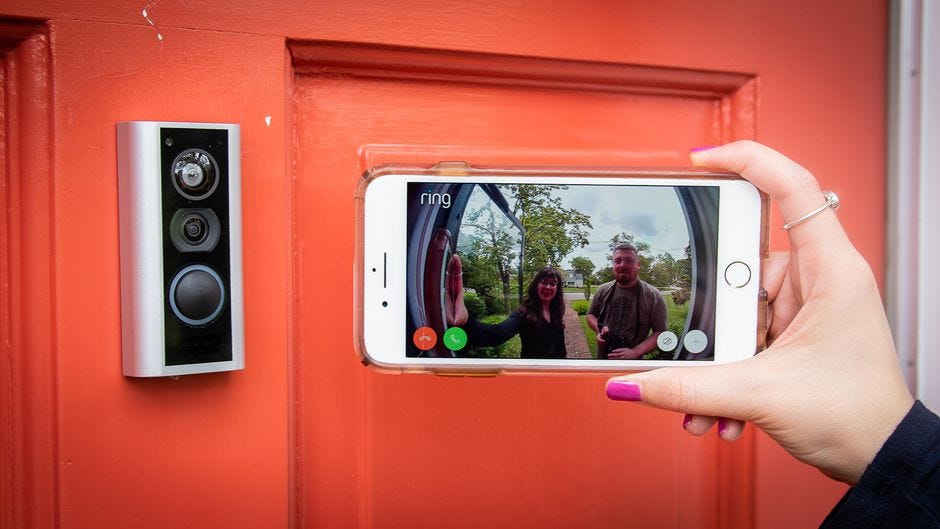

However, security footage can come not only from security cameras but also from other, self-imposed surveillance, for example, the Amazon Ring doorbell cameras that homeowners buy to monitor their front doors. When police can watch the live streams of Ring doorbells from a thousand different households, it poses a threat to the privacy of the neighbors. While people tend not to expect any privacy when walking down the street in their neighborhood, giving police absolute access to all doorbell footage turns the purpose of these doorbells from active self-defense into passive mass surveillance. The problem is not simply the privacy of those who are unknowingly recorded by the camera. It is that a doorbell that is supposed to protect the neighborhood could now be used against it.

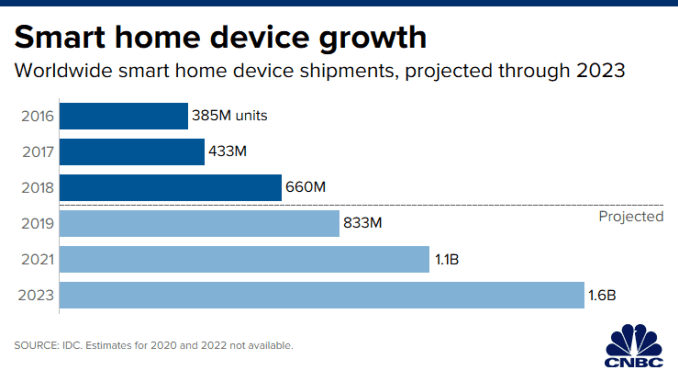

The Internet of Things (IoT) refers to the physical devices around the world that are connected to the internet, collecting and sharing data. The ubiquity of the Internet of Things opens more side doors for potential invasions of privacy. Georgetown Law Professor Marc Rotenberg said in an interview with Newsweek that a market-based solution to the privacy issues surrounding the Internet of Things does not work because "we don't have strong advocacy for privacy within the Congress."

Privacy concerns surrounding IoT, for example, the unnoticed collection of data through smart-home devices, are becoming another hot topic and are gradually eroding the trust that these devices earned through high-quality services.

Other Internet of Things devices face a similar dilemma. For example, as Carsten Maple wrote in "Security and privacy in the Internet of Things," "parents who give children smart toys are either implicitly or explicitly giving consent for the data pertaining to their child to be collected, processed, stored, and transmitted. However, parents are not empowered to consent to the handling of data of their children's friend interacting with the toy." Similarly, a doorbell camera will inevitably capture footage of neighbors.

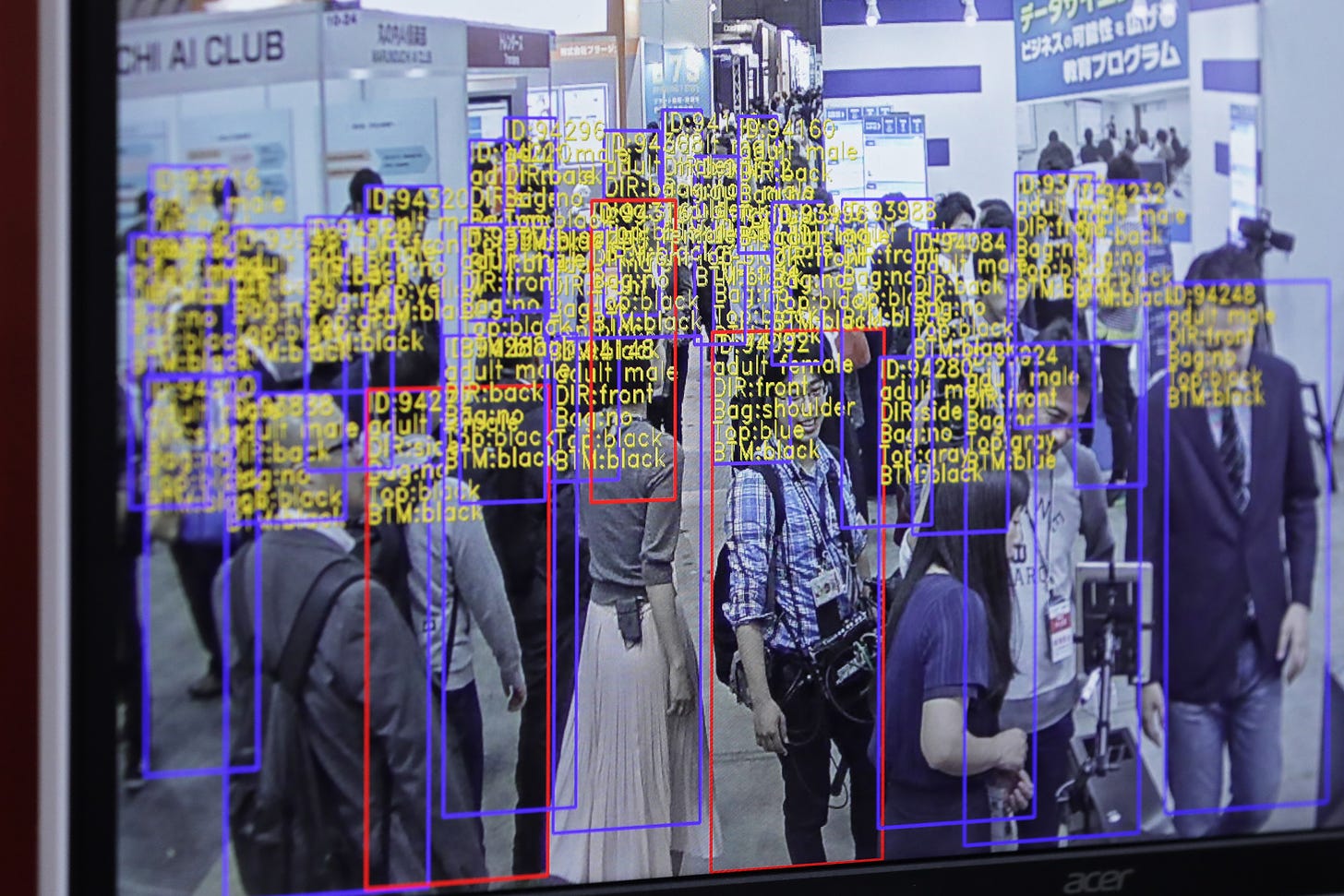

The problem does not end with privacy concerns or security threats on the Internet of Things. Mass surveillance technology itself can put minorities in a worse situation, and the rise of facial recognition could make mass surveillance even more intrusive. China's facial recognition database includes nearly every one of China's 1.4 billion citizens. Shanghai-based YITU Technology has gained wide recognition for its facial scan platform, which can identify a person from a database of at least 2 billion people in a matter of seconds. Facial recognition creates a searchable identifier for the target and, combined with surveillance footage, forms a timeline, so that law enforcement agencies can simply file requests online to get comprehensive information about an individual. It is even scarier to imagine the results of facial recognition being livestreamed like surveillance footage, although China has already been doing this by publicly displaying the faces of blacklisted people detected by security cameras and disclosing their personal information (redacted national ID, name, address, etc.) on screens on the street.

Gender or racial bias might be inherent in surveillance equipped with facial recognition technology. One cause may be technical, for example, the technology's varying ability to detect surfaces of different lightness. However, a technical explanation does not change the fact that the bias exists. Whatever caused the bias, it creates a problem depending on what the technology is used for or against. For example, false positives and false negatives emerge when facial recognition is used to ban certain "trouble makers" from entering a shopping mall, depriving certain minority communities of basic rights. It is worth noting that even if facial recognition resolves the false positives and false negatives from a technical standpoint, that does not necessarily solve the issue of scaling, which is created by how the technology is used rather than by its technical limitations. Technical insufficiency and mishandling of the technology together make the future of facial recognition even more uncertain.
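
One way to see how error rates and scale interact is a short back-of-the-envelope calculation. The sketch below is only an illustration: the function name and every number in it (crowd size, watchlist size, error rates) are hypothetical assumptions, not figures from any real system.

```python
def expected_alerts(people_scanned, people_on_watchlist,
                    true_positive_rate, false_positive_rate):
    """Estimate alerts from one screening pass (all inputs are assumptions)."""
    people_not_on_list = people_scanned - people_on_watchlist
    # Correct alerts: watchlisted people the system recognizes.
    true_alerts = people_on_watchlist * true_positive_rate
    # Wrong alerts: ordinary people the system mistakes for someone listed.
    false_alerts = people_not_on_list * false_positive_rate
    total = true_alerts + false_alerts
    # Share of alerts that point at the right person.
    precision = true_alerts / total if total else 0.0
    return true_alerts, false_alerts, precision


# Hypothetical scenario: one million passers-by, 100 of them on a watchlist,
# a system that catches 99% of listed faces and misfires on 0.1% of the rest.
true_alerts, false_alerts, precision = expected_alerts(
    people_scanned=1_000_000,
    people_on_watchlist=100,
    true_positive_rate=0.99,
    false_positive_rate=0.001,
)
print(f"true alerts: {true_alerts:.0f}, false alerts: {false_alerts:.0f}, "
      f"share of alerts that are correct: {precision:.1%}")
```

Under these assumed numbers, roughly a thousand people would be wrongly flagged for about a hundred who are correctly flagged, so most alerts would point at the wrong person even though the error rate looks small.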

Moreover, when surveillance technology is implemented in policing, where entrenched institutional biases have influenced policing policy and interactions with Black and Hispanic community members, the technology itself could magnify unconscious bias. In my conversation with Ian Adam, a criminal justice researcher who focuses on body-worn cameras in policing, Adam said that former President Obama formed a task force in 2015 to advocate for body-worn cameras as a way to close the gap between policing and community expectations of transparency and openness. However, much recent research has shown that the expected outcomes of this technology did not consistently appear. He pointed out that body-worn cameras have had "unintended consequences," including privacy concerns, decreasing organizational support for the police, and increasing emotional burnout among police officers.

Meanwhile, every camera or surveillance system has its own subjectivity. For example, a body-worn camera sits on the officer's body, facing outward. It does not record what happens on the other side of the camera and thus might miss part of the narrative. The interpretation of the footage is also subjective and can be used against those who need it the most.

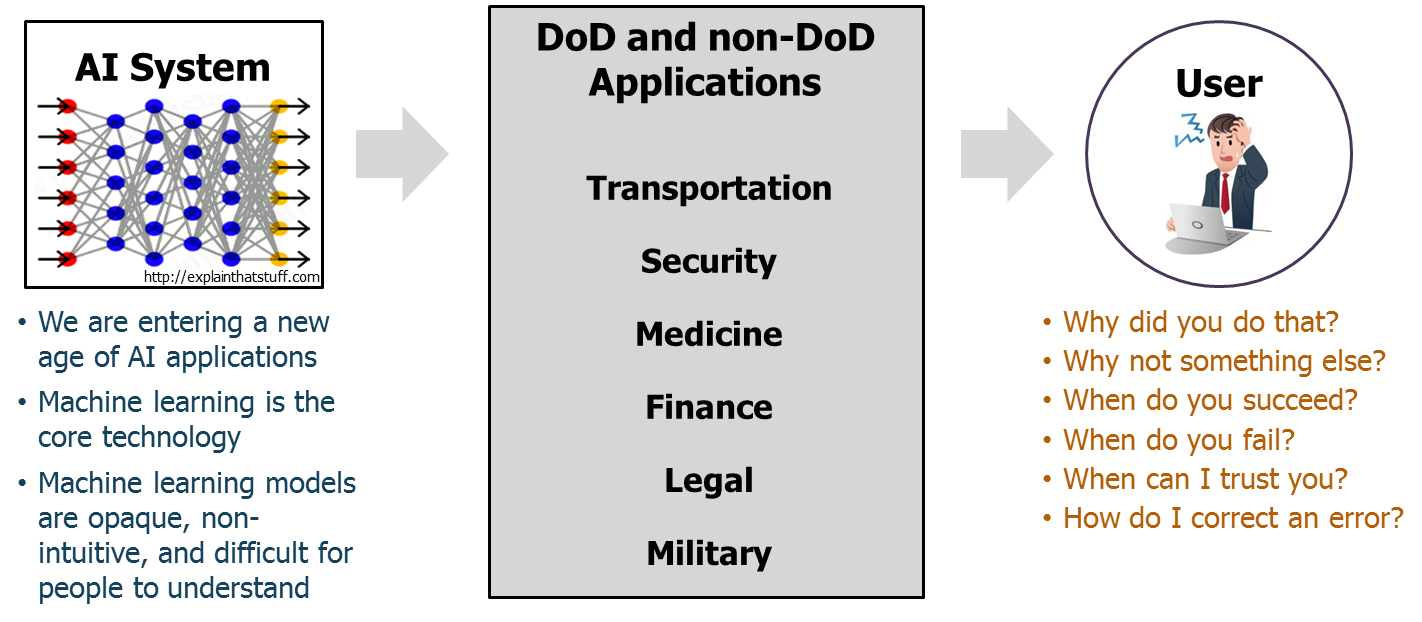

Therefore, law enforcement needs to prioritize the accurate processing and interpretation of information over efficiency. As more artificial intelligence is incorporated into mass surveillance, authorities need to make sure the program cannot be subverted by an adversary. That means making sure that the training data used in machine learning is not biased and that the system's decisions are not based on factors that are overly correlated in the training data.
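
A basic check for this kind of bias is to compare error rates across groups on labeled test data. The following is a minimal sketch, assuming each test record is a hypothetical `(group, predicted_match, actual_match)` tuple; the group labels and sample records are made up for illustration.

```python
from collections import defaultdict


def false_positive_rate_by_group(records):
    """Return {group: false positive rate} over records of
    (group, predicted_match, actual_match)."""
    false_positives = defaultdict(int)
    actual_negatives = defaultdict(int)
    for group, predicted_match, actual_match in records:
        if not actual_match:
            # Only people who are truly not a match can be false positives.
            actual_negatives[group] += 1
            if predicted_match:
                false_positives[group] += 1
    return {g: false_positives[g] / n for g, n in actual_negatives.items()}


# Made-up evaluation records for two hypothetical groups.
sample_records = [
    ("group_a", True, False), ("group_a", False, False),
    ("group_a", False, False), ("group_a", False, False),
    ("group_b", True, False), ("group_b", True, False),
    ("group_b", False, False), ("group_b", False, False),
    ("group_b", True, True),
]
rates = false_positive_rate_by_group(sample_records)
print(rates)
```

A large gap between groups, as in this made-up sample, would be a signal to re-examine the training data before the system is deployed.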

In addition, when processing particularly sensitive data, authorities can experiment with "explainable AI," which allows the system to give its reasoning instead of just making yes-or-no decisions. Developers can then examine each decision more closely and make further adjustments based on the system's performance, thus minimizing bias generated by the machine itself. As explained by TechTarget, expanding explainable AI is especially important in heavily regulated industries such as insurance, banking and healthcare. If an incident does happen, the humans involved need to be able to understand why and how that incident happened. Law enforcement could also benefit from explainable AI, in that the technology provides room for fixing existing problems inherent in the computer system.
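
To make the idea concrete, here is a minimal sketch of a decision that carries its own reasoning. It assumes a simple weighted-sum score, which is only one of many possible approaches; the feature names, weights, and threshold are hypothetical and do not describe any real system.

```python
def explain_decision(features, weights, threshold):
    """Score an input and report how much each feature contributed."""
    # Each feature's contribution is its value times its weight.
    contributions = {name: weights[name] * value
                     for name, value in features.items()}
    score = sum(contributions.values())
    # List the most influential factors first, whatever their sign.
    reasons = sorted(contributions.items(),
                     key=lambda item: abs(item[1]), reverse=True)
    return {"flagged": score >= threshold, "score": score, "reasons": reasons}


# Hypothetical inputs: a reviewer can see which factor drove the outcome.
decision = explain_decision(
    features={"face_similarity": 1.0, "image_quality": 2.0},
    weights={"face_similarity": 0.5, "image_quality": -1.0},
    threshold=0.0,
)
print(decision["flagged"])
for name, contribution in decision["reasons"]:
    print(f"{name}: {contribution:+.2f}")
```

Because the output lists each factor's contribution rather than only a yes or no, a reviewer can spot when a decision rests on a factor that should not matter.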

From a policy point of view, it is important for every stakeholder to recognize whom the technology empowers - in this case, who gets to manage and interpret the technology and what it can be used for or against. As a byproduct of mass surveillance, China's social credit system was initially built as a financial "bonus" program for those who behave well. It worked "for" the citizen. However, it has since developed into a self-discipline program and is sometimes used "against" certain individuals. For example, the social credit program now places strict conditions on how people rent cars, apply for loans, or purchase real estate. The social credit system shows how mass surveillance can be turned against the people it was meant to serve. With that lesson in mind, technologies like facial recognition should be built in a way that "disempowers" the government from telling individuals whom to trust or distrust.